We stare at screens ALL DAY. We accept the color as normal. But the way screens have historically produced color and the way humans actually perceive color have never really been the same thing.

Enter: OKLab.

OKLab is a perceptually uniform color space. Perceptual in that it’s specifically designed to align the digital expression of color with how we actually perceive color, so equal steps in the numbers look like roughly equal steps to the eye. The name (OK) is intentionally modest. Not perfect, just consistent, usable, and aligned with human perception. But to me, it looks like the color innovation we need today. For people and machines.

This color space isn’t brand new; it was released in 2020. And the gap between machine production and human perception has been known for far longer.

People have been trying to solve for perception for 30+ years, and this color space is the closest and easiest to use so far. It’s increasingly accessible, but still far from widely adopted.

Color for People

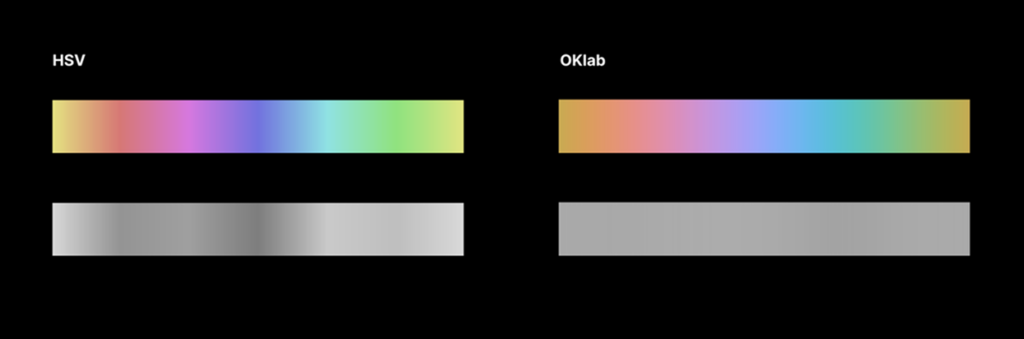

This color space is fundamentally different from its RGB predecessors. It’s nonlinear, like we are.

It reshapes the math behind color so that differences behave more like we perceive them: nonlinear, uneven, and relational. The result? Color transitions that feel consistent, color that holds its character, color that behaves the way humans expect it to, regardless of device.

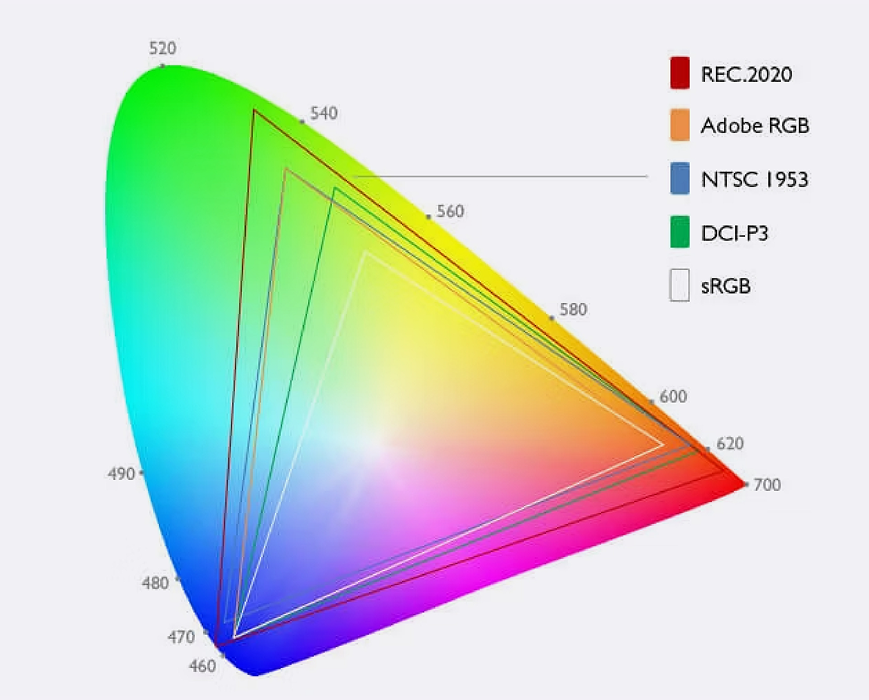

Long before iPhones, color scientists recognized the need for calculable, predictable color and started aiming for consistency beyond what devices alone could produce. As screens became an everyday category, we needed a methodology for producing consistent color.

And it led to compromise. A dependable intersection of color science and tech left some serious gaps. Color for machines does not equal color for people.

Taking a human-centered approach brings us back to reimagining and recalculating geometric space with people in mind. That results in redefining how color systems are created, how light is used in 3D space, and how math is leveraged to create consistency across these formulas.

The gap between human and machine color used to be a designer’s problem. Now it’s everyone’s.

Color for AI (and People)

AI generates images mathematically. Color systems shape the output. As more of our visual world is generated this way, the quality of the system matters more than ever.

OKLab is already reshaping how color works in Photoshop, browsers, and game engines. And it’s making its way into AI image generation too, as a perceptual processing layer. It doesn’t replace RGB because it can’t; our screens still require the old system. So it operates in the middle.

RGB goes in, OKLab does the perceptual heavy lifting, RGB comes back out for display. The math still measures the difference between coordinates A and B, but it measures it in a space where that difference matches what a person would see, so the result feels more human.
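To make that round trip concrete, here is a minimal Python sketch of the flow: sRGB in, OKLab in the middle, sRGB back out. The matrix coefficients are the ones published with OKLab; the function names (`srgb_to_oklab`, `oklab_to_srgb`, `oklab_distance`, `mix_oklab`) are invented for this illustration and are not the API of Photoshop, a browser, or any AI tool. It assumes sRGB channels as floats from 0 to 1, and it simply clips out-of-range results instead of doing proper gamut mapping.

```python
import math

def srgb_to_linear(c):
    """Undo the sRGB transfer curve for one channel in [0, 1]."""
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

def linear_to_srgb(c):
    """Re-apply the sRGB transfer curve, clipping to [0, 1] first."""
    c = min(max(c, 0.0), 1.0)  # naive clip; an assumption, not real gamut mapping
    return 12.92 * c if c <= 0.0031308 else 1.055 * c ** (1 / 2.4) - 0.055

def _cbrt(x):
    """Cube root that also tolerates tiny negative inputs."""
    return math.copysign(abs(x) ** (1 / 3), x)

def srgb_to_oklab(rgb):
    """sRGB (r, g, b) floats in [0, 1] -> OKLab (L, a, b)."""
    r, g, bl = (srgb_to_linear(c) for c in rgb)
    l = _cbrt(0.4122214708 * r + 0.5363325363 * g + 0.0514459929 * bl)
    m = _cbrt(0.2119034982 * r + 0.6806995451 * g + 0.1073969566 * bl)
    s = _cbrt(0.0883024619 * r + 0.2817188376 * g + 0.6299787005 * bl)
    return (
        0.2104542553 * l + 0.7936177850 * m - 0.0040720468 * s,
        1.9779984951 * l - 2.4285922050 * m + 0.4505937099 * s,
        0.0259040371 * l + 0.7827717662 * m - 0.8086757660 * s,
    )

def oklab_to_srgb(lab):
    """OKLab (L, a, b) -> sRGB (r, g, b) floats in [0, 1]."""
    L, a, b = lab
    l = (L + 0.3963377774 * a + 0.2158037573 * b) ** 3
    m = (L - 0.1055613458 * a - 0.0638541728 * b) ** 3
    s = (L - 0.0894841775 * a - 1.2914855480 * b) ** 3
    return (
        linear_to_srgb(4.0767416621 * l - 3.3077115913 * m + 0.2309699292 * s),
        linear_to_srgb(-1.2684380046 * l + 2.6097574011 * m - 0.3413193965 * s),
        linear_to_srgb(-0.0041960863 * l - 0.7034186147 * m + 1.7076147010 * s),
    )

def oklab_distance(rgb1, rgb2):
    """Straight-line distance between two sRGB colors, measured in OKLab."""
    return math.dist(srgb_to_oklab(rgb1), srgb_to_oklab(rgb2))

def mix_oklab(rgb1, rgb2, t):
    """Blend two sRGB colors by interpolating in OKLab (t=0 -> rgb1, t=1 -> rgb2)."""
    lab1, lab2 = srgb_to_oklab(rgb1), srgb_to_oklab(rgb2)
    mixed = tuple(p + (q - p) * t for p, q in zip(lab1, lab2))
    return oklab_to_srgb(mixed)
```

Calling `mix_oklab((0.0, 0.0, 1.0), (1.0, 1.0, 0.0), 0.5)` returns the midpoint of a blue-to-yellow blend computed in OKLab rather than in raw RGB, and `oklab_distance` is the "difference between A and B" measured where it is meant to track what we see.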

And that starts to approach what Alan Turing hinted at. The question isn’t whether a machine is intelligent, but whether its output is indistinguishable from a human’s.

“A computer would deserve to be called intelligent if it could deceive a human into believing that it was human” – widely attributed to Alan Turing

Kind of scary, but it’s less about replacing people and more about arming them with the right systems to do their best work no matter the tool.

If your brand’s visual identity is increasingly being generated, interpolated, or scaled by AI (and let’s be honest – it is), the color system underneath the output is shaping your brand whether you’re thinking about it or not.

Better alignment between math and perception brings that indistinguishable output closer – into every Midjourney export and every Weavy visual workflow.

Pro Tip: Start playing with palette creation according to the new (old) world order. Have fun tweaking “chroma” (the C in OKLCH, the lightness, chroma, hue form of OKLab) and pretend RGB never existed.
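If you want to try that in code rather than in a color picker, here is a small Python sketch of a palette built in OKLCH: hold hue and chroma steady, step the lightness, and convert each swatch to a hex value at the very end. The function names and the default lightness range (0.95 down to 0.25) are arbitrary choices for this example, not a standard. Out-of-range results are simply clipped, so `in_srgb_gamut` is there to tell you when a chroma value is more than sRGB can show.

```python
import math

def oklch_to_linear_rgb(L, C, h_deg):
    """OKLCH (lightness, chroma, hue in degrees) -> linear sRGB, unclipped."""
    h = math.radians(h_deg)
    a, b = C * math.cos(h), C * math.sin(h)  # polar -> OKLab a/b axes
    l = (L + 0.3963377774 * a + 0.2158037573 * b) ** 3
    m = (L - 0.1055613458 * a - 0.0638541728 * b) ** 3
    s = (L - 0.0894841775 * a - 1.2914855480 * b) ** 3
    return (
        4.0767416621 * l - 3.3077115913 * m + 0.2309699292 * s,
        -1.2684380046 * l + 2.6097574011 * m - 0.3413193965 * s,
        -0.0041960863 * l - 0.7034186147 * m + 1.7076147010 * s,
    )

def encode_srgb(c):
    """Apply the sRGB transfer curve to one linear channel, clipping to [0, 1]."""
    c = min(max(c, 0.0), 1.0)  # naive clip; an assumption, not real gamut mapping
    return 12.92 * c if c <= 0.0031308 else 1.055 * c ** (1 / 2.4) - 0.055

def oklch_to_srgb(L, C, h_deg):
    """OKLCH -> display-ready sRGB floats in [0, 1]."""
    return tuple(encode_srgb(c) for c in oklch_to_linear_rgb(L, C, h_deg))

def in_srgb_gamut(L, C, h_deg, eps=1e-4):
    """True if the color fits in sRGB without clipping (within a small tolerance)."""
    return all(-eps <= c <= 1 + eps for c in oklch_to_linear_rgb(L, C, h_deg))

def to_hex(rgb):
    """sRGB floats in [0, 1] -> '#rrggbb'."""
    return "#" + "".join(f"{round(c * 255):02x}" for c in rgb)

def lightness_ramp(h_deg, chroma, steps=5, lightest=0.95, darkest=0.25):
    """Evenly spaced lightness steps at a fixed hue and chroma (steps >= 2)."""
    swatches = []
    for i in range(steps):
        L = lightest + (darkest - lightest) * i / (steps - 1)
        swatches.append(to_hex(oklch_to_srgb(L, chroma, h_deg)))
    return swatches
```

Something like `lightness_ramp(250, 0.1)` gives five swatches of one hue from light to dark. Nudge the chroma argument up and down to see how far you can push it before `in_srgb_gamut` starts returning `False`.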

Perception is Uniquely Human

We’ve gotten better at modeling color. Now it’s time to get better at using it. And perception has always been human. So get out there and clean up those gradients!